Perspective - (2022) Volume 10, Issue 3

MULTISCALE MODELLING APPLICATIONS IN FOOD ENGINEERING

Cummaudo Mupparapu*

Received: Sep 01, 2022, Manuscript No. JHRE-22-77552; Editor assigned: Sep 05, 2022, Pre QC No. JHRE-22-77552 (PQ); Reviewed: Sep 19, 2022, QC No. JHRE-22-77552; Revised: Sep 27, 2022, Manuscript No. JHRE-22-77552 (R); Published: Oct 05, 2022, DOI: 10.30876/2347-7393.22.10.205

Description

Foods are essentially complex objects: their organisation, structure, and composition are first defined by biological processes, which makes food engineering and modelling frequently more challenging than in other fields. Foods change quickly while being harvested, transported, and distributed. During food preparation, the content, the structure, and the physical states of the ingredients are all significantly altered, existing compounds degrade, and new chemical compounds are created. In other words, effective modelling should account for the changes in physicochemical attributes as a function of the processing level, as well as for the impact of supply-chain variations and initial biological variability. These qualities are also significantly altered by processing, storage, final preparation, and digestion. Food modelling typically addresses only one aspect of this complexity: it seeks the evolution of an averaged food product rather than the diversity between food products. Therefore, the thermodynamic, transport, and mechanical properties, averaged over a representative structure and composition, are assumed to be constant or to depend only on macroscale state variables (e.g., temperature, water content). This study argues that, given the rapid advances in modelling and simulation in other engineering fields such as materials, polymer science, and soft matter, the implicit paradigm of averaging every component of food transformation may one day be questioned. The search for alternatives to traditional modelling was sparked by the dearth of comprehensive, publicly available databases on mechanistic food attributes.
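To make the averaging paradigm concrete, the sketch below computes an averaged thermal conductivity for a food idealised as two phases, water and lumped dry solids, using the classical parallel and series (Wiener) mixing rules. These rules and all numerical values are illustrative assumptions, not a model proposed in this study. They show how the averaged property ends up depending only on a macroscale state variable (here the water volume fraction): any real arrangement of the two phases gives a value between the two bounds, and which value applies depends on microstructure that the averaged model does not resolve.

```python
"""Averaged (effective) thermal conductivity of a two-phase food.

Illustrative sketch only: the food is idealised as water plus a lumped
dry-solid phase, and the solid conductivity is a hypothetical value.
"""

K_WATER = 0.60  # W/(m K), approximate conductivity of liquid water near 20 °C
K_SOLID = 0.20  # W/(m K), hypothetical lumped value for the dry solids


def parallel_k(phi_water: float) -> float:
    """Upper (Wiener) bound: phases arranged parallel to the heat flow."""
    return phi_water * K_WATER + (1.0 - phi_water) * K_SOLID


def series_k(phi_water: float) -> float:
    """Lower (Wiener) bound: phases arranged in series with the heat flow."""
    return 1.0 / (phi_water / K_WATER + (1.0 - phi_water) / K_SOLID)


if __name__ == "__main__":
    # The averaged property depends only on a macroscale state variable,
    # the water volume fraction, not on how the phases are arranged.
    for phi in (0.2, 0.5, 0.8):
        print(f"phi_water={phi:.1f}  k_series={series_k(phi):.3f}  "
              f"k_parallel={parallel_k(phi):.3f}  W/(m K)")
```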

For many years, food engineers have tried to use mathematical models to describe physical phenomena, such as heat and mass transfer, that occur in foods during unit operations. Foods are organised hierarchically and contain elements at all scales, from the molecular to the plant level. To reduce computational complexity, food features at the fine scale are typically not represented explicitly but are incorporated, through averaging procedures, into models that operate at the coarse scale. As a result, fine-grained insight into the processes at the microscale is lost, and the coarse-scale model parameters become apparent (effective) quantities rather than true physical parameters. Because it is impracticable to measure these parameters for the vast majority of foods consumed today, the use of sophisticated mathematical models in the food sector is still in its infancy. Multiscale modelling, a new modelling paradigm, has emerged that might solve these issues. Multiscale models are essentially a hierarchy of sub-models that are connected to each other in order to represent the behaviour of materials at various spatial scales.
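The idea of a hierarchy of connected sub-models can be sketched with just two levels. In the hypothetical example below, a microscale sub-model condenses the pore structure of a tissue into a single effective diffusivity, and a macroscale sub-model (Crank's series solution of Fick's second law for a slab) uses only that number to predict drying. The relation `D_eff = D_bulk * porosity / tortuosity` is one common porous-media form, used here as a stand-in for a genuine microstructural simulation; the function names and all parameter values are assumptions made for illustration.

```python
"""A two-level (micro -> macro) model hierarchy for drying of a food slab.

Sketch under stated assumptions, not a validated food model:
* microscale sub-model: D_eff = D_bulk * porosity / tortuosity, a common
  porous-media relation standing in for a real microstructure simulation;
* macroscale sub-model: Crank's series solution of Fick's second law for a
  slab of half-thickness L with both faces held at equilibrium moisture.
All numerical values are hypothetical.
"""
import math


def effective_diffusivity(d_bulk: float, porosity: float, tortuosity: float) -> float:
    """Microscale sub-model: averages the pore structure into one parameter."""
    if not (0.0 < porosity <= 1.0) or tortuosity < 1.0:
        raise ValueError("need 0 < porosity <= 1 and tortuosity >= 1")
    return d_bulk * porosity / tortuosity


def moisture_ratio(d_eff: float, half_thickness: float, t: float, terms: int = 50) -> float:
    """Macroscale sub-model: mean moisture ratio (X - X_eq) / (X0 - X_eq).

    Truncated series; near t = 0 many terms are needed for accuracy.
    """
    total = 0.0
    for n in range(terms):
        k = 2 * n + 1  # only odd harmonics appear for a symmetric slab
        total += 8.0 / (k**2 * math.pi**2) * math.exp(
            -d_eff * k**2 * math.pi**2 * t / (4.0 * half_thickness**2))
    return total


if __name__ == "__main__":
    # The macroscale model receives only d_eff, never the microstructure.
    d_eff = effective_diffusivity(d_bulk=2.0e-9, porosity=0.3, tortuosity=2.0)
    for minutes in (5, 15, 30, 60):
        mr = moisture_ratio(d_eff, half_thickness=1.0e-3, t=60.0 * minutes)
        print(f"t={minutes:3d} min  MR={mr:.3f}")
```

Note that the macroscale sub-model sees only `d_eff`: once the microstructure has been averaged into it, the fine-scale information is gone, which is the loss described above. A full multiscale model would replace `effective_diffusivity` with a simulation on an actual microstructure.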

Since the early work of Ball (1923) on modelling heat transfer during sterilisation, food engineers have tried to create mathematical models of food processes, either to improve their understanding of the physical phenomena that occur during food processing or to design new processes and optimise existing ones. Depending on the complexity, different modelling approaches are used, ranging from entirely observation-based to entirely physics-based: polynomial models are typically used to describe simple relationships between variables, such as the sweetness perceived by a human expert and the food's sugar content; ordinary differential equations are used to model variables that change over time, such as the inactivation of microorganisms during pasteurisation; and partial differential equations of mathematical physics are used to model variables that change over both time and space, such as the temperature and moisture fields inside a potato chip during frying. Partial differential equations are challenging to solve because, except for simple geometries and boundary conditions, there is typically no known closed-form analytical solution, so numerical techniques are needed to approximate the solution of the governing equations. The majority of commercial codes can be customised to the needs of the process engineer through user routines, and all of them feature pre-processing facilities for specifying complex geometries. Because many physical processes, such as heat and mass transfer and thermo-hygro-elastic deformation, are naturally coupled, several of these codes also offer so-called multiphysics capabilities.
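The contrast between the ODE and PDE approaches can be illustrated with a minimal coupled sketch. The temperature field in a slab is obtained numerically from the heat-conduction PDE using explicit finite differences (one of several possible numerical techniques, chosen here for brevity), and the microbial population at the slab centre follows the classical log-linear inactivation ODE, with a D-value that depends on temperature through a z-value. The geometry, boundary conditions, thermal diffusivity, and kinetic parameters (`D_REF`, `T_REF`, `Z`) are hypothetical, and moisture transport is ignored, so this is not a model of frying or of any specific process.

```python
"""Coupled PDE + ODE sketch: conduction heating of a food slab and
first-order microbial inactivation at its centre.

Assumptions (all hypothetical, for illustration only):
* pure 1-D conduction with constant thermal diffusivity; moisture
  transport, evaporation and shrinkage are ignored;
* both surfaces jump instantly to the heating-medium temperature
  (infinite surface heat transfer coefficient);
* log-linear inactivation d(log10 N)/dt = -1 / D(T), with
  D(T) = D_REF * 10**((T_REF - T) / Z).
"""
import numpy as np

ALPHA = 1.4e-7                     # m^2/s, thermal diffusivity (typical order for moist foods)
THICKNESS = 0.01                   # m, slab thickness
T_INIT, T_MEDIUM = 20.0, 75.0      # °C, initial and heating-medium temperatures
D_REF, T_REF, Z = 60.0, 70.0, 7.0  # s, °C, °C (hypothetical kinetic parameters)


def simulate(total_time: float, nodes: int = 41, fourier: float = 0.4):
    """Explicit finite differences (FTCS) for the 1-D heat equation.

    `nodes` should be odd so that one grid node sits at the slab centre.
    Returns the final temperature profile and the log10 reduction
    accumulated at the centre.
    """
    dx = THICKNESS / (nodes - 1)
    dt = fourier * dx**2 / ALPHA       # FTCS is stable for fourier <= 0.5
    steps = int(np.ceil(total_time / dt))
    dt = total_time / steps            # land exactly on total_time (dt only shrinks)
    temp = np.full(nodes, T_INIT)
    temp[0] = temp[-1] = T_MEDIUM      # Dirichlet boundary conditions
    centre = nodes // 2
    log_reduction = 0.0
    for _ in range(steps):
        # ODE: explicit Euler step for log10 N at the centre temperature.
        d_value = D_REF * 10.0 ** ((T_REF - temp[centre]) / Z)
        log_reduction += dt / d_value
        # PDE: T_i += Fo * (T_{i+1} - 2 T_i + T_{i-1}); the right-hand side
        # is evaluated from the old profile before the in-place update.
        temp[1:-1] += ALPHA * dt / dx**2 * (temp[2:] - 2.0 * temp[1:-1] + temp[:-2])
    return temp, log_reduction


if __name__ == "__main__":
    profile, logs = simulate(total_time=300.0)
    print(f"centre temperature: {profile[len(profile) // 2]:.1f} °C")
    print(f"log10 reduction at centre: {logs:.2f}")
```

The explicit scheme is simple but only conditionally stable, which is why the time step is derived from the grid Fourier number; implicit schemes avoid this restriction at the cost of solving a linear system at every step.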

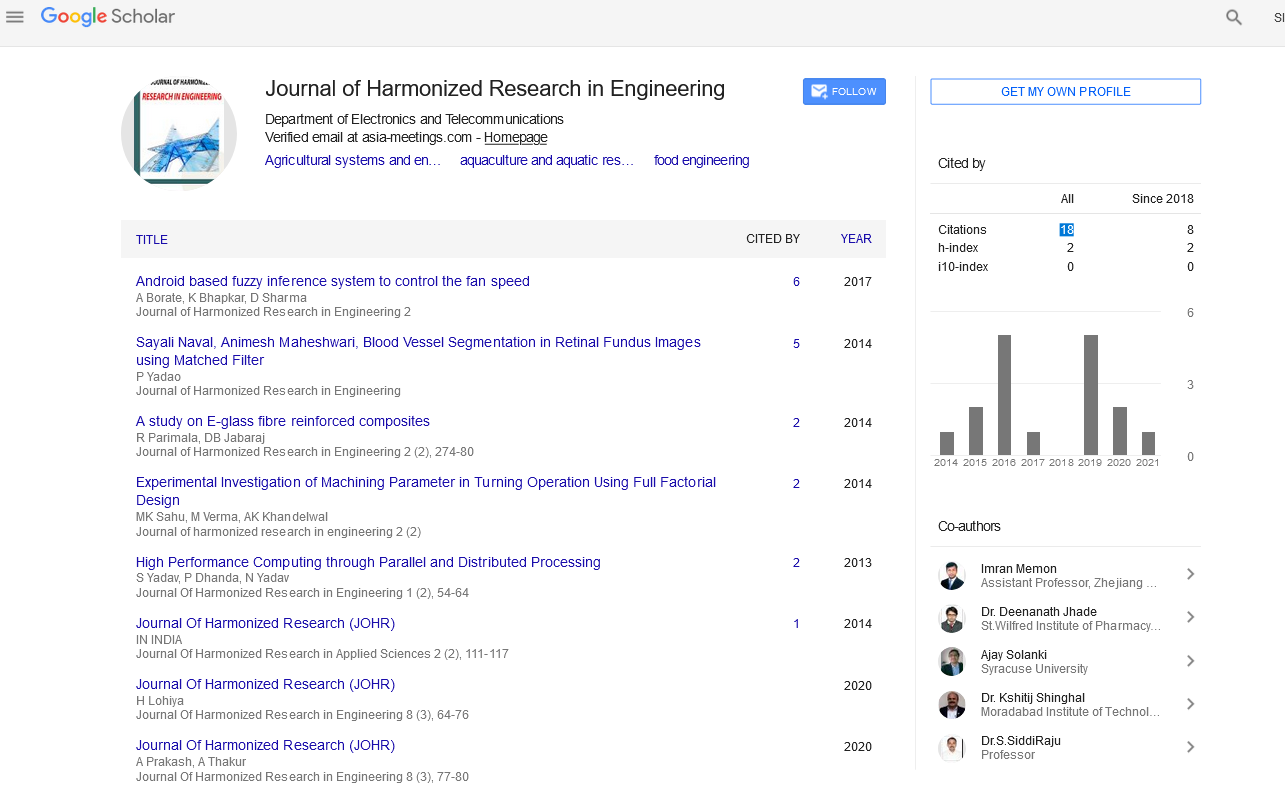

Google Scholar citation report

Citations : 43

Journal of Harmonized Research in Engineering received 43 citations as per google scholar report